AI Transformation's True Bottleneck Is Decision Velocity, Not Technology

- Richa

- Apr 19

The Moment That Looks Like Progress but Isn’t

It is 4:47 PM on a Thursday, and the AI model has just completed its calculation.

The results are solid: 37% savings in delivery costs within the region if three distribution centers merge and routing uses dynamic time windows. The confidence level is high.

By the following Monday, the insight has been shared twice. An “alignment” meeting is set for Wednesday. By Friday, three more stakeholders have joined the thread. One asks, “But what if it hurts the customer experience?” Another asks, “Why don’t we run a pilot first?”

Six weeks later, the insight remains unimplemented. Not because anything was wrong with the model, but because no one had decided in advance what should happen once the insight arrived.

This Is Where Most AI Strategies Quietly Break

AI misses its mark not because models are slow but because organizations are.

While executive teams argue over parameter counts and vendor partnerships, the real constraint has shifted to the rate of decisions. Contemporary AI delivers recommendations in milliseconds. An organization typically needs weeks to approve, deploy, and learn from them.

What Fast and Slow Organizations Actually Look Like

The distributor that moved fast

A mid-sized logistics firm introduced AI to optimize its inventory. The model was 89 percent accurate, yet it delivered no initial benefit, because every decision required an email to the district manager. The average response time was 2.3 days.

The problem that needed fixing was not technical. It was the approval path, so the firm sorted recommendations into three tiers:

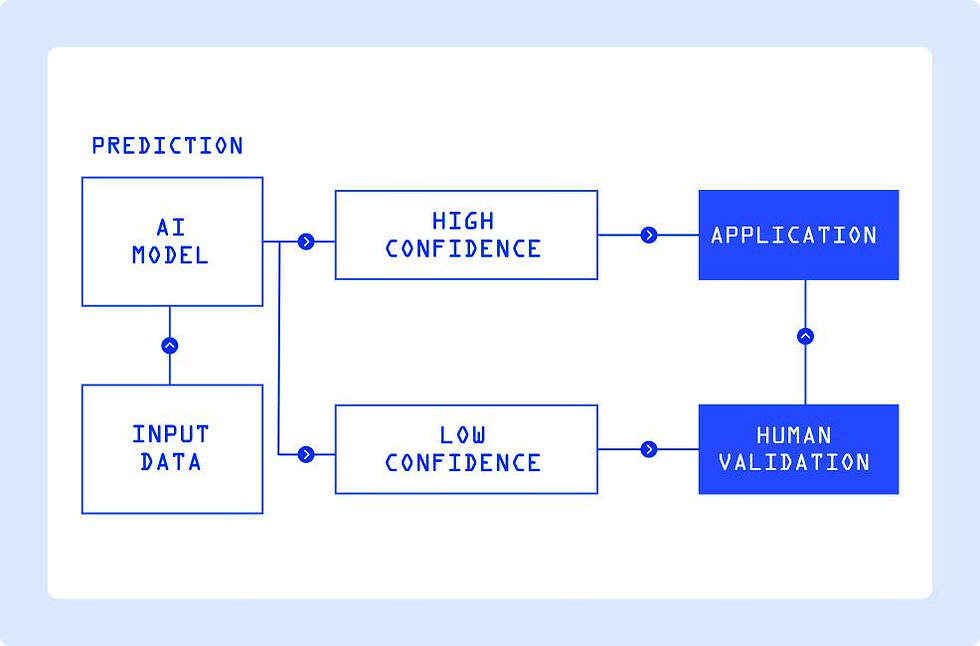

≥85% confidence and <$2.5K risk → Autopilot execution

70–84% confidence or $2.5K–$10K risk → One-click approval/rejection with canned reasons

<70% confidence or >$10K risk → Escalate to regional leader with context pack

All of these criteria were pre-approved in a single 90-minute meeting, which ended the case-by-case debate. Decision latency dropped from 14 days to under 4 hours, and waste fell by 22 percent. None of that happened because the AI became smarter.
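The three tiers can be sketched as a small routing function. This is only an illustration, not the firm's actual system: the names `Route` and `route_decision` are hypothetical, confidence is assumed to be a fraction between 0 and 1, and a confidence between 84% and 85% (which the tiers leave unspecified) is assumed to fall into the one-click lane.

```python
from enum import Enum


class Route(Enum):
    """The three pre-approved lanes (names are illustrative)."""
    AUTOPILOT = "autopilot execution"
    ONE_CLICK = "one-click approval/rejection"
    ESCALATE = "escalate to regional leader"


def route_decision(confidence: float, risk_usd: float) -> Route:
    """Map a recommendation to a lane.

    confidence: model confidence as a fraction in [0, 1] (assumption).
    risk_usd:   dollar exposure of acting on the recommendation.
    """
    # Check the most restrictive lane first, so a low-confidence or
    # high-risk recommendation can never slip into a faster lane.
    if confidence < 0.70 or risk_usd > 10_000:
        return Route.ESCALATE
    # Either middling confidence or middling risk is enough for a human click.
    if confidence < 0.85 or risk_usd >= 2_500:
        return Route.ONE_CLICK
    # Only >=85% confidence AND <$2.5K risk reaches this point.
    return Route.AUTOPILOT
```

The point of writing the rules down this way is that the argument happens once, when the thresholds are set, rather than every time a recommendation arrives.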

The financial firm that moved slowly

A well-funded organization spent 18 months developing an AI credit underwriting tool. On historical data it showed 94% accuracy. It was ready for deployment. Yet the tool sat idle.

Why? Each lending decision needed a risk attestation signed by three departments, an audit log entry, a bi-weekly compliance review, and a 20-minute override log. The model had been optimized. The decision-making process had not. Six months later, the project quietly died.

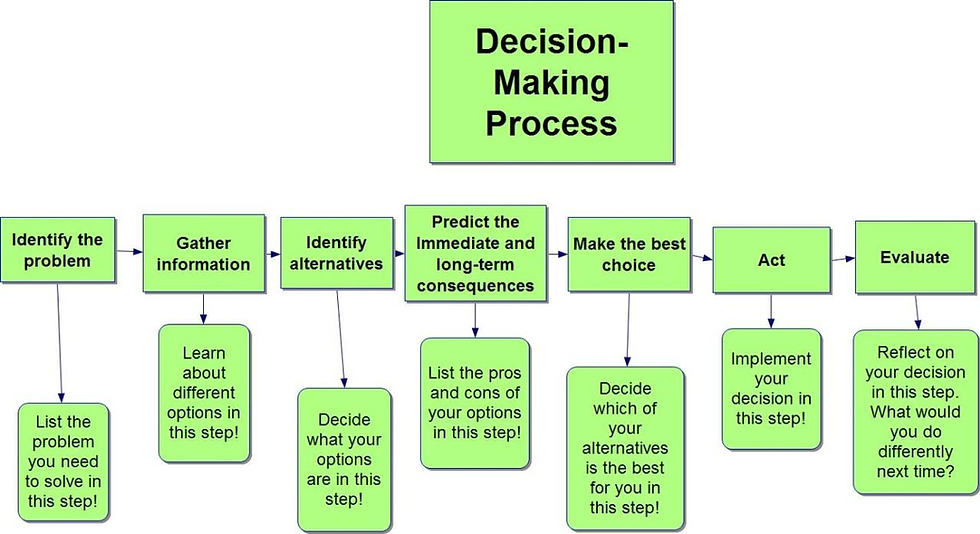

Winners do more than use AI; they redesign how decisions get made. They decentralize decision-making within clear limits. And rather than focusing on the accuracy of the prediction, they focus on the efficiency of the process.
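Focusing on the process starts with measuring it. A minimal sketch, assuming each recommendation is logged with the time it was produced and the time someone acted on it; the log entries and the helper `decision_latency_hours` below are hypothetical, not data from either firm:

```python
from datetime import datetime
from statistics import median


def decision_latency_hours(recommended_at: datetime, acted_at: datetime) -> float:
    """Hours between the model's recommendation and the organization's action."""
    return (acted_at - recommended_at).total_seconds() / 3600


# Hypothetical log entries: (recommendation timestamp, action timestamp).
log = [
    (datetime(2024, 1, 4, 16, 47), datetime(2024, 1, 4, 18, 47)),  # acted after 2 h
    (datetime(2024, 1, 5, 9, 0), datetime(2024, 1, 5, 12, 0)),     # acted after 3 h
    (datetime(2024, 1, 8, 10, 0), datetime(2024, 1, 9, 10, 0)),    # acted after 24 h
]

latencies = [decision_latency_hours(rec, act) for rec, act in log]

# The median is less distorted than the mean by one decision stuck in review.
median_latency = median(latencies)
print(f"Median decision latency: {median_latency:.1f} h")
```

Tracked week over week, a number like this shows whether the handoff is getting faster, independent of how accurate the model is.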

Before Fixing AI, Fix the Decision Pipeline

At this point, the question isn’t whether your AI works. It’s whether your organization is designed to act on it.

Four Levers to Fix Decision Velocity (This Quarter)

You don’t need an enterprise-wide overhaul. You need to remove friction where it actually lives: the human handoff.

Technology generates probability. Organizations generate decisions

The gap between the two is the bottleneck. The companies that succeed over the coming decade will not be the ones with the most parameters and the fastest GPUs. They will be the ones that recognize AI transformation as a matter of answering human questions: how to delegate without giving up authority, how to scale without losing trust, and how to learn faster than the competition.

Rethink your decision architecture. Delegate in measured steps. Focus on pipeline analytics rather than predictive analytics. Decision velocity is AI velocity. Transformation is no longer a project; it’s a multiplier.
